
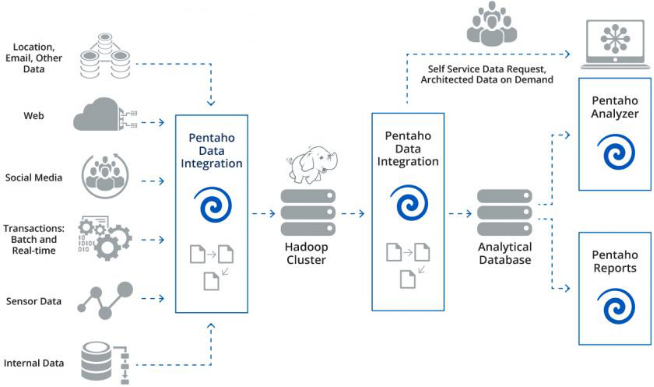

With the explosion of data from the web, mobile apps, smart devices, social media, CRM systems and more, many organizations struggle to extract value from the data they generate. This information is rarely stored in a single database. Analytics software and solutions can provide a holistic view of a company's operations by analyzing diverse data sets. That requires data integration, because many systems must communicate with each other and finding the right data efficiently is a major challenge.

Data integration is the process of combining information from multiple databases for use in a single application. In layman's terms, it makes parts that don't normally fit together work as one. Decision making requires accurate analytics. Accurate analytics in turn require accurate data integration to build the dashboards, reports and visualizations that give decision makers their insights. If data is not cleaned and mined first, queries return useless apples-to-oranges comparisons.

We need a data warehouse that can translate data from every source into a common format, despite the growing volume and diversity of information. This information repository is built with an ETL (extract, transform and load) process. The process ingests and cleans up data on a scheduled basis and delivers ready-to-use information to the data warehouse. A Java development company can then build applications that query the data warehouse for information already prepared for analysis. Data integration also helps an organization build a strong digital footprint.

There are many types of data integration challenges, such as heterogeneous data, isolated integration and big bad data, and each can be solved with its own unique solution. Pentaho is one such solution: it helps deliver value faster and scales to meet increasing data demands. Below are the reasons why Pentaho is ideally suited for big data integration.

1. APACHE SPARK INTEGRATION: Pentaho integrates easily with Spark. This helps customers who want to adopt this popular technology to:
   - lower the skills barrier for Spark, and
   - coordinate, schedule, reuse and manage Spark applications in data pipelines more easily and flexibly.
2. METADATA INJECTION: Pentaho onboards multiple data types by dynamically generating PDI (Pentaho Data Integration) transformations at runtime instead of requiring each data source to be hand-coded, reducing costs by 10X.
3. HADOOP DATA SECURITY INTEGRATIONS: Securing big data environments is extremely difficult because the technologies that define authentication and access are continuously evolving. Pentaho has expanded its Hadoop data security integration to promote better big data governance and protect clusters from intruders.
4. APACHE KAFKA SUPPORT: Apache Kafka is an increasingly popular publish/subscribe messaging system for handling large data volumes, and it is common in today's big data and IoT solutions. Pentaho now provides enterprise support for sending and receiving data from Kafka, enabling continuous data processing use cases in PDI.
5. ENHANCED SUPPORT FOR POPULAR HADOOP FILE FORMATS: Pentaho now supports output of files in the Avro and Parquet formats in PDI. Both formats are popular for storing data in Hadoop in big data onboarding use cases.

Pentaho, a Hitachi Group company, is a leading data integration and business analytics company with an enterprise-class, open source-based platform for diverse big data deployments. Pentaho's unified data integration and analytics platform is comprehensive and fully embeddable, and it delivers governed data to power any analytics in any environment.

Have you used Pentaho to solve data integration issues? Feel free to share your experience in the comments.
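Two ideas from this post can be illustrated together with a small Python sketch: the goal of translating every source into a common format with ETL, and the metadata-injection approach from item 2. This is a hypothetical example, not Pentaho's PDI API. The source names, field names and the `SOURCE_METADATA`, `build_transform` and `run_etl` helpers are all assumptions, and an in-memory SQLite database stands in for the data warehouse. Each source is described only by mapping metadata, and a transform function is generated from that metadata at runtime rather than hand-coded per source.

```python
"""Hypothetical sketch of metadata-driven ETL (illustrative only, not Pentaho's PDI API)."""
import sqlite3

# Hypothetical raw extracts from two systems that name and format the same fields differently.
CRM_ROWS = [
    {"CustName": "Acme Corp", "Revenue_USD": "1200.50", "Country": "us"},
    {"CustName": "Globex", "Revenue_USD": "980.00", "Country": "de"},
]
WEB_ROWS = [
    {"customer": "Initech", "revenue_cents": 45000, "country_code": "GB"},
]

# Hypothetical mapping metadata: for each source, every common-format field names the
# source field it comes from and the conversion to apply. Onboarding a new source means
# adding metadata here, not writing a new transformation by hand.
SOURCE_METADATA = {
    "crm": {
        "customer": ("CustName", str.strip),
        "revenue": ("Revenue_USD", float),
        "country": ("Country", str.upper),
    },
    "web": {
        "customer": ("customer", str.strip),
        "revenue": ("revenue_cents", lambda cents: cents / 100),
        "country": ("country_code", str.upper),
    },
}


def build_transform(mapping):
    """Generate, at runtime, a function that converts one source row into the common format."""
    def transform(row):
        return {
            target: convert(row[source_field])
            for target, (source_field, convert) in mapping.items()
        }
    return transform


def run_etl(sources, conn):
    """Extract rows per source, transform them via their metadata, and load them into one table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(source TEXT, customer TEXT, revenue REAL, country TEXT)"
    )
    for name, rows in sources.items():
        transform = build_transform(SOURCE_METADATA[name])  # metadata "injected" here
        for row in rows:
            clean = transform(row)
            conn.execute(
                "INSERT INTO customers VALUES (?, ?, ?, ?)",
                (name, clean["customer"], clean["revenue"], clean["country"]),
            )
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for the data warehouse
run_etl({"crm": CRM_ROWS, "web": WEB_ROWS}, conn)
for record in conn.execute(
    "SELECT source, customer, revenue, country FROM customers ORDER BY customer"
):
    print(record)
# ('crm', 'Acme Corp', 1200.5, 'US')
# ('crm', 'Globex', 980.0, 'DE')
# ('web', 'Initech', 450.0, 'GB')
```

In this sketch, onboarding a third source only requires a new entry in `SOURCE_METADATA`. In PDI, the equivalent work is done with transformations and metadata injection rather than hand-written Python.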


Archives

December 2017
